It always bothers me when I can’t make something work that should work, so when I ran into a bit of a hurdle in my last post, I couldn’t “quit” for the day not knowing what I did wrong. I took care of my other obligations and went back at it.

The last error I received indicated there was still something wrong with my environment variables; it came up while the installer for the Ryzen AI Software was trying to set up a Conda environment. I had added the path to Conda’s scripts directory to my environment variables, but that didn’t solve the issue, and that’s where I put the computer away earlier.

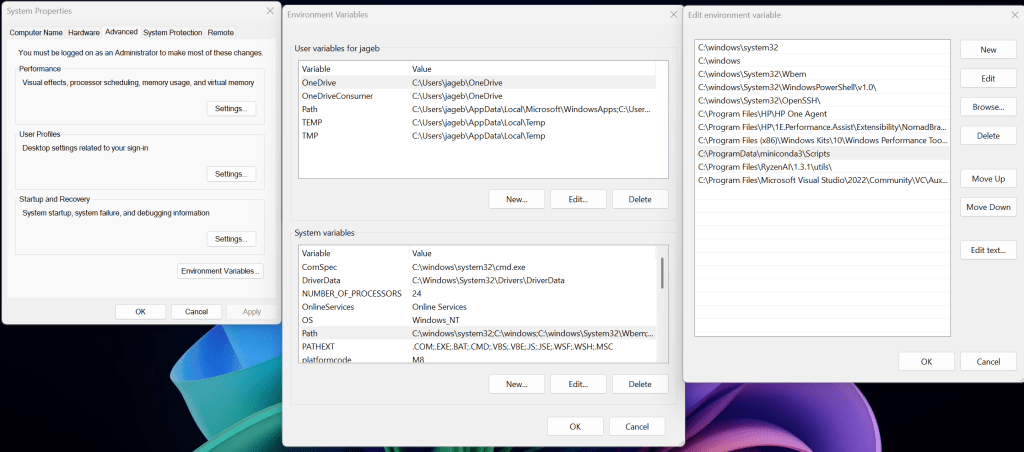

With a fresh mind, I realized I had set the environment variable for just my user, not the whole system. So I made the same entry in the system-wide environment variables, and that solved the issue.
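For anyone hitting the same snag, here is a minimal sketch of how one might check which scope a directory is registered in. It assumes Windows keeps the per-user `Path` under `HKEY_CURRENT_USER\Environment` and the system-wide one under `HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\Session Manager\Environment`. The Conda directory below is a hypothetical example, not the path from my machine, and the helper names are my own.

```python
import ntpath
import sys

# Hypothetical example location; substitute your own Conda scripts directory.
CONDA_SCRIPTS = r"C:\ProgramData\miniconda3\Scripts"

# Registry locations assumed to hold the two Path values.
SCOPES = {
    "user": ("HKEY_CURRENT_USER", r"Environment"),
    "system": (
        "HKEY_LOCAL_MACHINE",
        r"SYSTEM\CurrentControlSet\Control\Session Manager\Environment",
    ),
}


def _norm(entry: str) -> str:
    """Normalize a Windows path entry (case, slashes, trailing separator)."""
    return ntpath.normcase(ntpath.normpath(entry.strip().strip('"')))


def path_contains(path_value: str, directory: str) -> bool:
    """Return True if `directory` is one of the ';'-separated entries."""
    wanted = _norm(directory)
    return any(_norm(e) == wanted for e in path_value.split(";") if e.strip())


def read_registry_path(scope: str) -> str:
    """Read the raw Path value for 'user' or 'system' (Windows only)."""
    import winreg  # imported here so the helpers above work on any OS

    hive_name, subkey = SCOPES[scope]
    with winreg.OpenKey(getattr(winreg, hive_name), subkey) as key:
        value, _value_type = winreg.QueryValueEx(key, "Path")
    return value


if __name__ == "__main__" and sys.platform == "win32":
    for scope in SCOPES:
        try:
            raw = read_registry_path(scope)
        except FileNotFoundError:
            raw = ""  # no Path value defined at this scope
        # Note: entries containing %VARIABLES% are compared unexpanded.
        print(f"{scope}: {'present' if path_contains(raw, CONDA_SCRIPTS) else 'missing'}")
```

In my case a check like this would have reported the directory as present for the user scope but missing for the system scope, which is exactly the gap I had to close.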

And that served to bring me to my next error, which was basically a pip error… just a timeout when trying to download something, apparently:

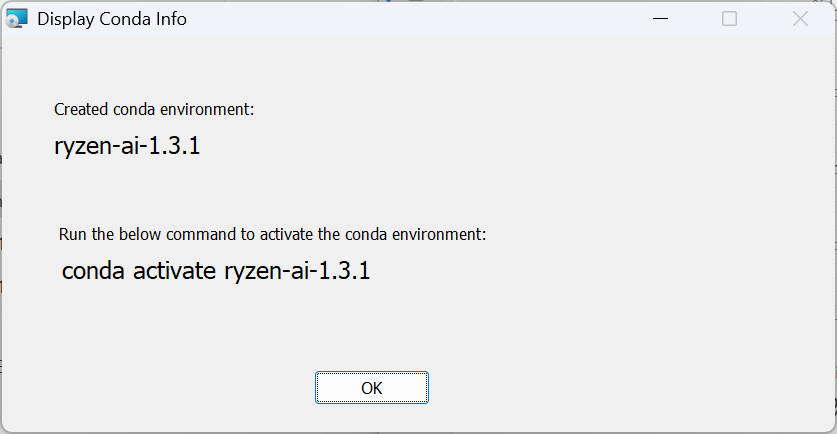

pip._vendor.urllib3.exceptions.ReadTimeoutError: HTTPSConnectionPool(host='files.pythonhosted.org', port=443): Read timed out.

A quick Google search confirmed my gut reaction: retry it… the Internet is a wonderful thing, but there’s no guaranteed service level. So I reran the installer, and voilà!

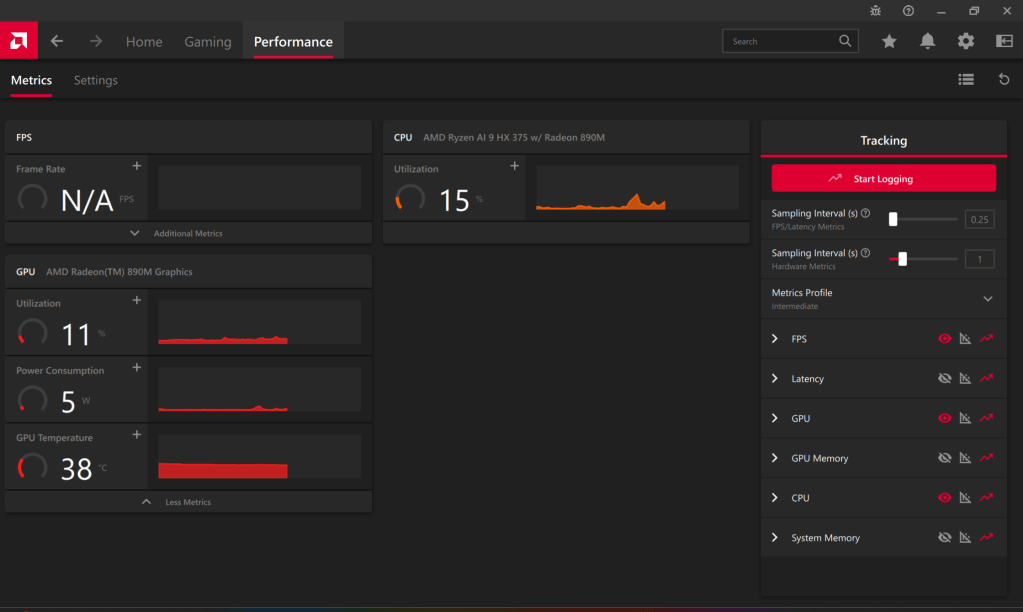
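Rerunning by hand was all I needed, but the “just retry it” advice can be sketched in a few lines. This is an illustration only, not what the Ryzen AI installer does internally: `retry`, `pip_install`, and their parameters are hypothetical names of my own, and the pip call assumes you are running a pip step yourself, using pip’s `--timeout` and `--retries` options.

```python
import subprocess
import sys
import time
from typing import Callable, Sequence


def retry(
    action: Callable[[], bool],
    attempts: int = 3,
    base_delay: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Call `action` until it returns True, waiting longer after each failure."""
    for attempt in range(1, attempts + 1):
        if action():
            return True
        if attempt < attempts:
            # Exponential backoff: base_delay, then 2x, then 4x, ...
            sleep(base_delay * 2 ** (attempt - 1))
    return False


def pip_install(args: Sequence[str]) -> bool:
    """Run `pip install` with a longer socket timeout; True on a clean exit."""
    cmd = [
        sys.executable, "-m", "pip", "install",
        "--timeout", "60",   # seconds to wait on a slow connection
        "--retries", "5",    # pip's own per-connection retry count
        *args,
    ]
    return subprocess.run(cmd).returncode == 0
```

Something like `retry(lambda: pip_install(["some-package"]))` would then try up to three times, where `some-package` is only a placeholder. For a one-off timeout like mine, simply running the installer again is just as good.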

So back to the checklist on AMD’s website:

- NPU Drivers – check

- Ryzen AI 1.3 MSI installer – check

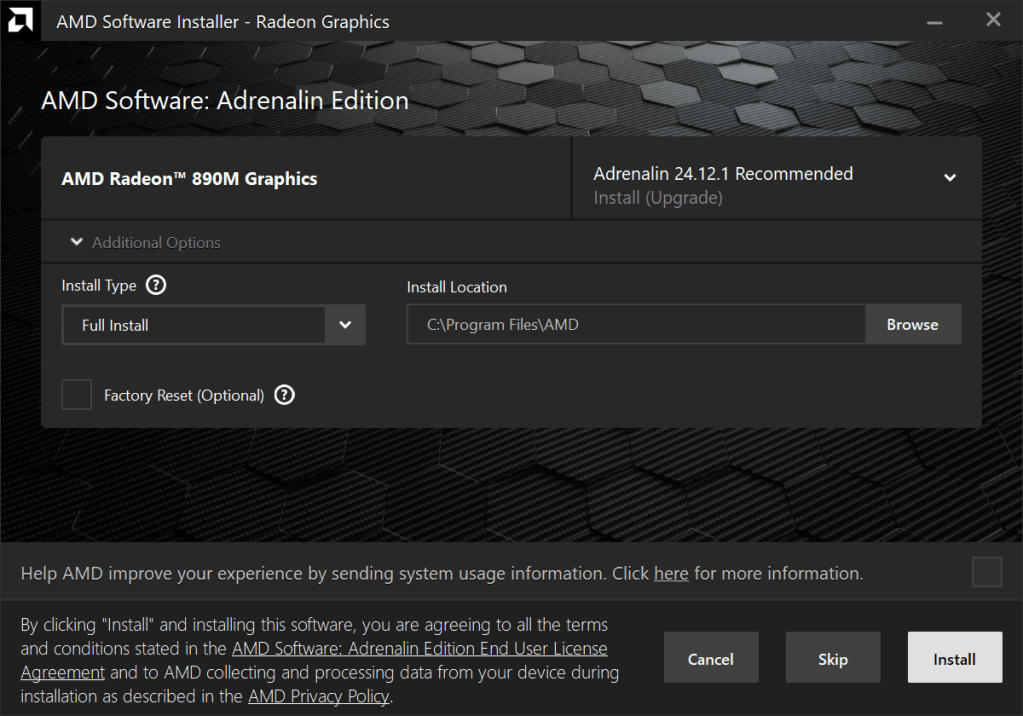

- Latest AMD GPU device driver – I thought I had the latest already, but apparently not:

Install everything? Sure, why not…

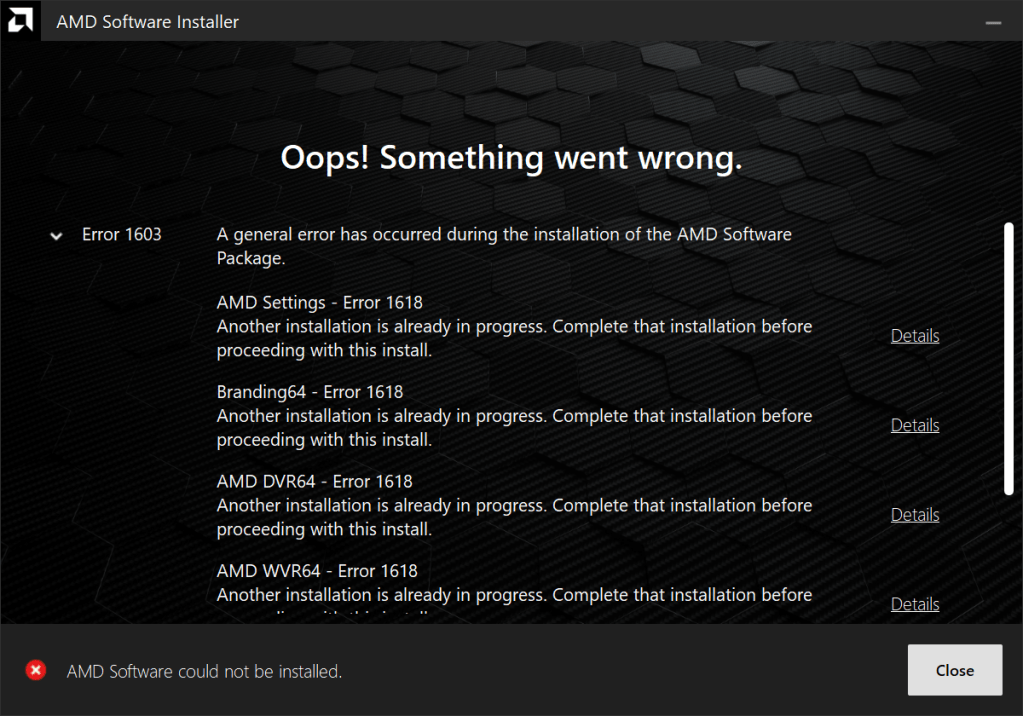

… and another error…

So I rebooted and tried again, and that seemed to do the trick…

On to the next bullet point, “Hybrid LLM artifacts for general LLMs” from AMD.com… I follow the link and log on, and get this…

Seriously, AMD? I’m just looking to play with some LLMs… not launch codes. Oh well… let’s try the next one for DeepSeek models…

Looks like the best place to start will be here, but it’s late, and I overcame the problem I had earlier… I think this will be my stopping point for now. Stay tuned for the next post, which may be either a continuation of this initiative or a look into some Python scripting I’ve started using to send prompts to multiple models and compare the output.
